Solving the Distributed Cache Invalidation Problem with Redis and HybridCache

Source: Milan Jovanović - Solving the Distributed Cache Invalidation Problem with Redis and HybridCache

Date Saved: 2026-02-08

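📝 Summary

This article examines the cache synchronization challenge that HybridCache, newly introduced in .NET 9, faces in distributed systems. HybridCache combines an L1 (in-memory) cache with an L2 cache (such as Redis), but in a multi-node deployment, when one node updates data, the L1 caches on the other nodes are not invalidated automatically. The article shows how to use Redis Pub/Sub to build a communication backplane that delivers real-time cache invalidation notifications across nodes.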

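💡 Key Points

- HybridCache limitation: the L1 cache is independent in each application instance, and there is no built-in mechanism to synchronize invalidation across instances.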

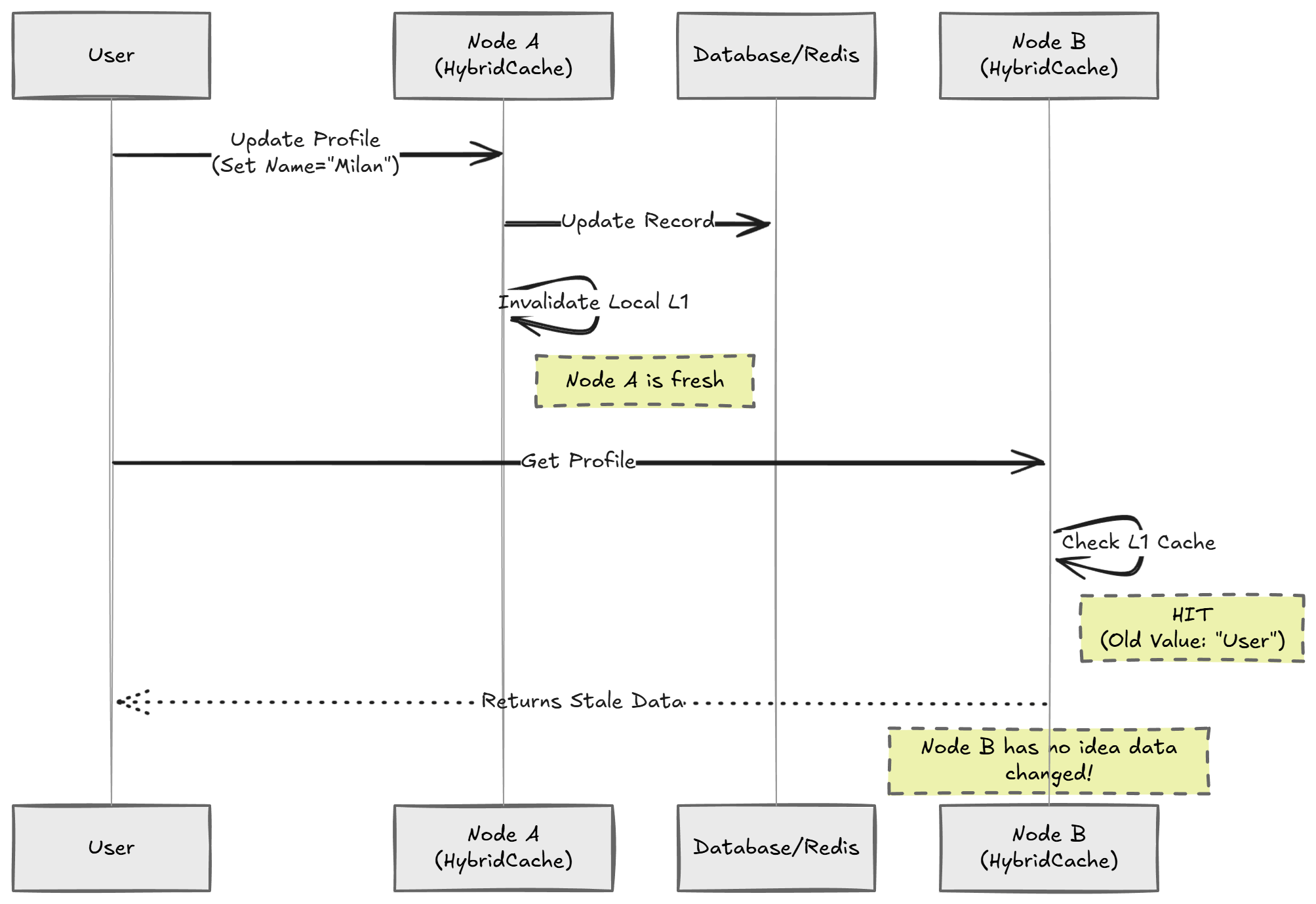

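- Cache inconsistency scenario: a detailed walkthrough of how data becomes inconsistent between servers behind a load balancer.

- Redis Pub/Sub backplane: a Redis channel acts as the message relay. When a node removes a cache entry, it publishes a message, and every node subscribed to the channel removes the entry from its local L1 cache on receipt.

- Implementation: complete C# code for the ICacheInvalidator publishing interface and a BackgroundService subscription listener.

- Preferred option, FusionCache: the article recommends FusionCache, a third-party library with a mature implementation of this feature. It integrates seamlessly with HybridCache and offers stronger protection features, such as cache stampede prevention.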

🚀 Overview

Distributed systems are great for scalability, but they introduce a whole new class of problems. One of the hardest problems to solve is cache invalidation.

In .NET 9, Microsoft introduced HybridCache to simplify caching. It combines the speed of in-memory caching (L1) with the durability of distributed caching (L2) like Redis.

However, when you run multiple instances of your application, HybridCache doesn't automatically synchronize the local L1 cache across all nodes. If you update data on Node A, Node B will continue serving stale data from its in-memory cache until the entry expires.

📉 The Distributed Caching Dilemma

In a typical production scenario with multiple servers behind a load balancer:

- User A updates their profile on Server 1.

- Server 1 updates the database and clears its local cache.

- User A's next request hits Server 2.

- Server 2 still holds the old data in its local HybridCache.

- The user sees outdated information.

Why Not Just Shorten TTL?

Reducing TTL (e.g., to 10s) masks the problem but introduces:

- Increased Latency: More frequent requests to Redis or the DB.

- Lost Efficiency: L1 caching's main benefit is avoiding network requests entirely.
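For reference, shortening the L1 lifetime might look like the sketch below. It assumes the Microsoft.Extensions.Caching.Hybrid package; ProfileReader, LoadDisplayNameAsync, the key format, and the durations are hypothetical and chosen only for illustration.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Extensions.Caching.Hybrid;

public sealed class ProfileReader(HybridCache cache)
{
    // Hypothetical values: L1 entries expire after 10 seconds, L2 entries after 5 minutes.
    private static readonly HybridCacheEntryOptions ShortLocalTtl = new()
    {
        Expiration = TimeSpan.FromMinutes(5),
        LocalCacheExpiration = TimeSpan.FromSeconds(10)
    };

    public ValueTask<string> GetDisplayNameAsync(int userId, CancellationToken ct = default) =>
        cache.GetOrCreateAsync(
            $"user:{userId}",
            async token => await LoadDisplayNameAsync(userId, token),
            ShortLocalTtl,
            cancellationToken: ct);

    // Hypothetical database call, stubbed so the sketch compiles on its own.
    private static Task<string> LoadDisplayNameAsync(int userId, CancellationToken ct) =>
        Task.FromResult($"User {userId}");
}
```

With these settings a node can still serve stale data for up to 10 seconds, and every local expiry costs another round trip to Redis or the database, which is the trade-off described above.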

💡 The Solution: Redis Pub/Sub Backplane

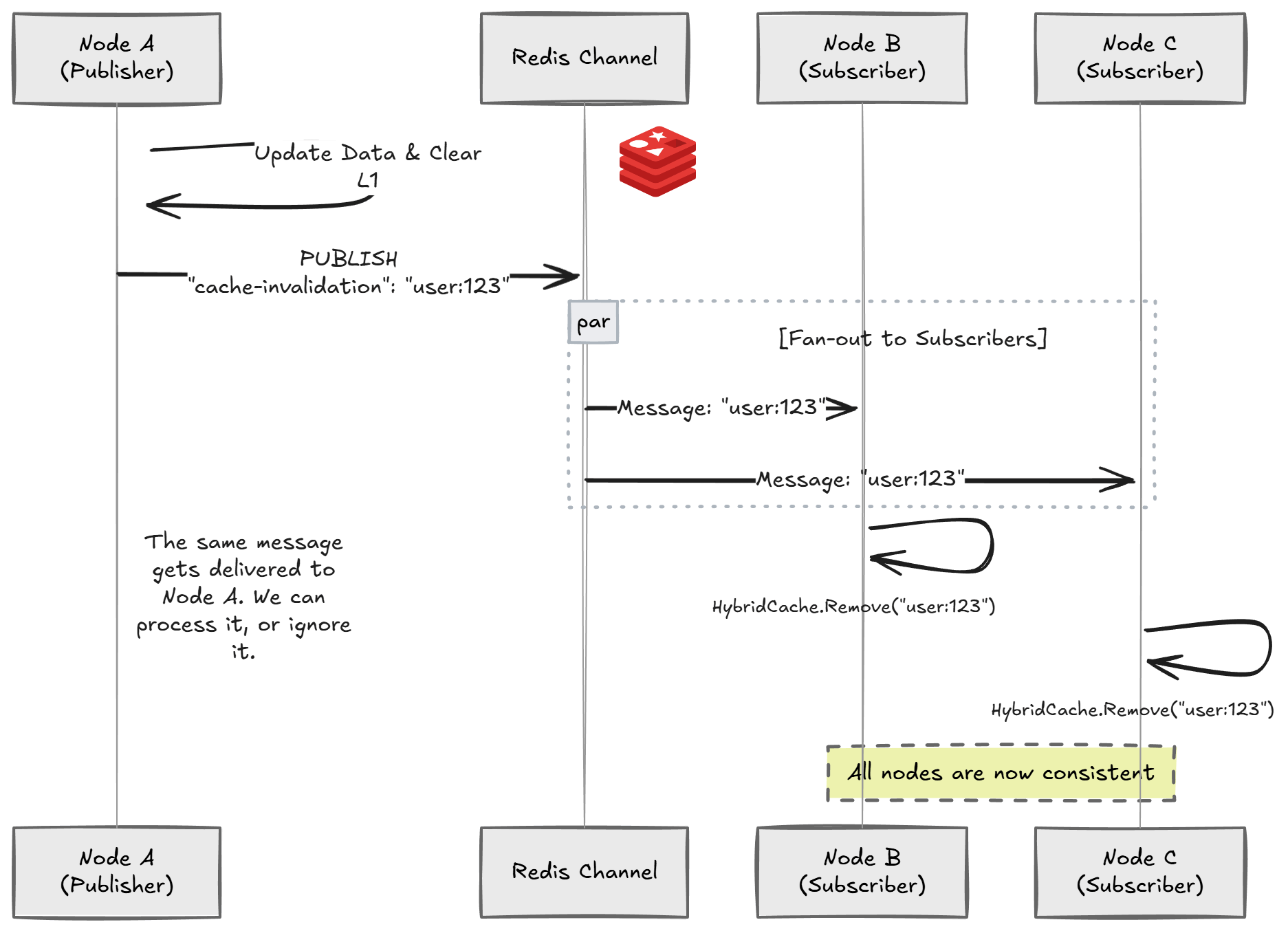

To solve this, we need a backplane: a communication channel that connects all nodes. When a node removes a cache entry, it publishes a message containing the key to the backplane. Every node subscribes to that channel and removes the corresponding key from its local L1 cache when a message arrives.
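The idea can be sketched without Redis at all. In the minimal in-process simulation below, InMemoryBackplane stands in for the Redis channel and Node for an application instance; both types, the key, and the values are hypothetical and not part of the original article.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical stand-in for a Redis Pub/Sub channel: fans a key out to every subscriber.
public sealed class InMemoryBackplane
{
    private readonly List<Action<string>> _handlers = new();

    public void Subscribe(Action<string> handler) => _handlers.Add(handler);

    public void Publish(string key)
    {
        foreach (var handler in _handlers)
        {
            handler(key);
        }
    }
}

// Hypothetical application node with its own private L1 cache.
public sealed class Node
{
    private readonly Dictionary<string, string> _l1 = new();
    private readonly Dictionary<string, string> _database;
    private readonly InMemoryBackplane _backplane;

    public Node(Dictionary<string, string> database, InMemoryBackplane backplane)
    {
        _database = database;
        _backplane = backplane;
        // Every node evicts the key locally when an invalidation message arrives.
        _backplane.Subscribe(key => _l1.Remove(key));
    }

    public string Read(string key)
    {
        if (!_l1.TryGetValue(key, out var value))
        {
            value = _database[key];
            _l1[key] = value; // populate L1 on a miss
        }
        return value;
    }

    public void Write(string key, string value)
    {
        _database[key] = value;
        _backplane.Publish(key); // tell all nodes, including this one, to evict
    }
}

public static class Demo
{
    public static void Main()
    {
        var database = new Dictionary<string, string> { ["user:1"] = "old name" };
        var backplane = new InMemoryBackplane();
        var server1 = new Node(database, backplane);
        var server2 = new Node(database, backplane);

        Console.WriteLine(server2.Read("user:1")); // prints "old name" and warms Server 2's L1
        server1.Write("user:1", "new name");       // the update happens on Server 1
        Console.WriteLine(server2.Read("user:1")); // prints "new name": Server 2 reloaded after eviction
    }
}
```

Without the Subscribe call in the Node constructor, the second read on Server 2 would keep returning "old name" until the entry expired.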

🛠️ Implementing the Solution

1. Invalidation Service

Define a service to handle publishing invalidation messages.

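```csharp
using StackExchange.Redis;

public interface ICacheInvalidator
{
    Task InvalidateAsync(string key, CancellationToken ct = default);
}

public class RedisCacheInvalidator(IConnectionMultiplexer connectionMultiplexer) : ICacheInvalidator
{
    // RedisChannel is a struct created by a method call, so it cannot be declared const
    private static readonly RedisChannel Channel = RedisChannel.Literal("cache-invalidation");

    public async Task InvalidateAsync(string key, CancellationToken ct = default)
    {
        // PublishAsync takes no CancellationToken, so the token is only checked up front
        ct.ThrowIfCancellationRequested();

        var subscriber = connectionMultiplexer.GetSubscriber();

        // The string key converts implicitly to a RedisValue
        await subscriber.PublishAsync(Channel, key);
    }
}
```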
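A write path might call the invalidator as sketched below. ProfileService, SaveDisplayNameAsync, and the key format are hypothetical. The ICacheInvalidator interface is repeated from the listing above only so the sketch compiles on its own.

```csharp
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Extensions.Caching.Hybrid;

// Repeated from the listing above so this sketch is self-contained.
public interface ICacheInvalidator
{
    Task InvalidateAsync(string key, CancellationToken ct = default);
}

public sealed class ProfileService(HybridCache cache, ICacheInvalidator invalidator)
{
    public async Task UpdateDisplayNameAsync(int userId, string displayName, CancellationToken ct = default)
    {
        // 1. Persist the change first so readers that miss the cache see the new value.
        await SaveDisplayNameAsync(userId, displayName, ct);

        var key = $"user:{userId}";

        // 2. Remove the entry on this node.
        await cache.RemoveAsync(key, ct);

        // 3. Ask every other node to evict its local copy.
        await invalidator.InvalidateAsync(key, ct);
    }

    // Hypothetical database write, stubbed for illustration.
    private static Task SaveDisplayNameAsync(int userId, string displayName, CancellationToken ct) =>
        Task.CompletedTask;
}
```

Publishing only after the database write completes avoids a window in which another node evicts the key and immediately reloads the old value.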

2. Background Listener

Run a background service on every node to listen for invalidation messages.

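```csharp
using Microsoft.Extensions.Caching.Hybrid;
using Microsoft.Extensions.Hosting;
using StackExchange.Redis;

public class CacheInvalidationService(
    IConnectionMultiplexer connectionMultiplexer,
    HybridCache hybridCache) : BackgroundService
{
    // RedisChannel is a struct created by a method call, so it cannot be declared const
    private static readonly RedisChannel Channel = RedisChannel.Literal("cache-invalidation");

    protected override async Task ExecuteAsync(CancellationToken stoppingToken)
    {
        var subscriber = connectionMultiplexer.GetSubscriber();

        // The returned queue delivers messages one at a time to the async handler
        var queue = await subscriber.SubscribeAsync(Channel);

        queue.OnMessage(async message =>
        {
            string? key = message.Message;
            if (string.IsNullOrEmpty(key)) return;

            // Evict the stale entry on this node and await the result instead of
            // discarding the ValueTask. Note that RemoveAsync also removes the key from L2.
            await hybridCache.RemoveAsync(key, stoppingToken);
        });
    }
}
```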
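Both pieces still need to be registered at startup. The fragment below is one possible wiring for an ASP.NET Core Program.cs. It assumes the StackExchange.Redis, Microsoft.Extensions.Caching.StackExchangeRedis, and Microsoft.Extensions.Caching.Hybrid packages, the two classes defined above, and a web project with implicit usings enabled. The connection string is a placeholder.

```csharp
using StackExchange.Redis;

var builder = WebApplication.CreateBuilder(args);

// Shared Redis connection used for publishing and subscribing.
builder.Services.AddSingleton<IConnectionMultiplexer>(
    _ => ConnectionMultiplexer.Connect("<REDIS_CONNECTION_STRING>"));

// Redis as the L2 (distributed) cache behind HybridCache.
builder.Services.AddStackExchangeRedisCache(options =>
    options.Configuration = "<REDIS_CONNECTION_STRING>");

builder.Services.AddHybridCache();

// Publisher used by write paths, and the listener that runs on every node.
builder.Services.AddSingleton<ICacheInvalidator, RedisCacheInvalidator>();
builder.Services.AddHostedService<CacheInvalidationService>();

var app = builder.Build();
app.Run();
```

Because the listener is a hosted service, every instance of the application subscribes to the channel as soon as it starts.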

🌟 A Better Way: FusionCache

If you don't want to build your own, FusionCache is a mature library that has solved this for years. It now includes a HybridCache implementation.

```csharp
builder.Services.AddFusionCache()
    .WithBackplane(new RedisBackplane(new RedisBackplaneOptions
    {
        Configuration = "<REDIS_CONNECTION_STRING>"
    }))
    .AsHybridCache();
```